Tech

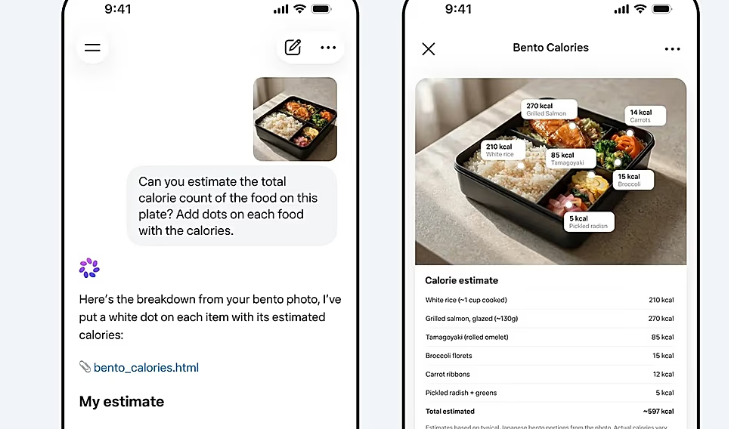

AI Tools Boost Paper Production but Raise Quality Concerns in Scientific Research

Large language models such as ChatGPT are increasing research output, particularly for scientists who are not native English speakers, but a new study warns that many AI-assisted papers are less likely to pass peer review.

Researchers at Cornell University, United States, analysed more than two million research papers posted between 2018 and 2024 on three major preprint servers, which host early versions of scientific work prior to formal review. Their findings, published in the journal Science, show that AI tools are reshaping how scientific papers are written and disseminated.

To identify AI-assisted papers, the team trained an AI system to detect text likely generated by large language models. Comparing papers posted before 2023 with those written after tools like ChatGPT became widely available, the researchers measured publication output and subsequent acceptance rates in scientific journals.

The analysis revealed a significant productivity boost for AI users. On a major preprint server for physics and computer science, researchers using AI produced about one-third more papers than those who did not. In biology and the social sciences, the increase exceeded 50 percent. The largest gains were seen among scientists whose first language is not English. In some Asian institutions, researchers published between 40 percent and nearly 90 percent more papers after adopting AI writing tools, depending on the discipline.

AI tools also appear to aid in literature review. Researchers using AI were more likely to identify newer studies and relevant books rather than relying on older, frequently cited works. “People using LLMs are connecting to more diverse knowledge, which might be driving more creative ideas,” said Keigo Kusumegi, a doctoral student and first author of the study.

Despite the productivity gains, the study highlights quality concerns. Many AI-written papers, while linguistically polished, were less likely to be accepted by journals. Papers written by humans that scored high on writing complexity were more likely to be accepted, whereas AI-generated papers with similar scores often failed to meet scientific standards.

“Already now, the question is not, ‘Have you used AI?’ The question is, ‘How exactly have you used AI and whether it’s helpful or not,’” said Yian Yin, assistant professor at Cornell and corresponding author of the study. Yin added that the widespread adoption of AI tools across disciplines, including the physical sciences, computer science, biology, and the social sciences, requires careful consideration by reviewers, funders, and policymakers.

The researchers stress that AI-assisted tools are reshaping the academic ecosystem, offering opportunities to improve productivity and access to scientific knowledge, but they also call for guidelines to ensure that the technology is used responsibly and that scientific contributions maintain their integrity.

As AI becomes increasingly integrated into research practices, the challenge for the scientific community will be balancing efficiency and innovation with rigorous evaluation standards to maintain the quality and credibility of published science.

Meta Launches Muse Spark, Its First Major AI Model in Nine Months

Meta has unveiled its first major AI model in nine months, following a $14.3 billion (€12.24 billion) investment spree and executive hiring push to rival OpenAI and Google. The American tech company introduced the model, called Muse Spark, on Wednesday, claiming it is faster and smarter than its previous technologies.

The company, founded by Mark Zuckerberg, invested $14.3 billion in Scale AI in June 2025 and recruited its CEO and co-founder, Alexandr Wang, to oversee Meta Superintelligence Labs, which houses teams working on foundational AI models. Zuckerberg also embarked on a hiring campaign, bringing in executives from competitors including OpenAI, Anthropic, and Google.

In a blog post, Meta said, “Over the last nine months, Meta Superintelligence Labs rebuilt our AI stack from the ground up, moving faster than any development cycle we have run before. This initial model is small and fast by design, yet capable enough to reason through complex questions in science, math, and health. It is a powerful foundation, and the next generation is already in development.”

Muse Spark is positioned as a significant upgrade over Meta’s last major release, Llama 4, launched in April 2025. The company highlighted that the model excels in advanced reasoning, particularly in scientific, mathematical, and medical queries. To improve its health advice capabilities, Meta worked with over 1,000 physicians to curate training data, aiming for more accurate and comprehensive responses.

The AI model will power the company’s digital assistant in the Meta AI app and website, with planned integration across Facebook, Instagram, WhatsApp, Messenger, and the Ray-Ban Meta AI glasses. A “contemplating mode” will gradually roll out, allowing multiple AI agents to reason in parallel on complex tasks. Meta’s technical blog noted this feature is designed to compete with high-level reasoning in models such as Gemini Deep Think and GPT Pro.

Zuckerberg emphasized on social media that Meta aims to build AI products that “don’t just answer your questions but act as agents that do things for you.” Unlike conventional chatbots, these AI agents operate autonomously, gathering information based on user preferences to assist without direct human commands.

One notable shift for Meta is the move away from open-source AI models. Unlike earlier releases, Muse Spark is not available for public download, meaning access to the technology is currently restricted. The company said the model is initially available only in the United States.

Muse Spark underscores Meta’s aggressive push into the competitive AI market, combining extensive investment, executive recruitment, and technical innovation to challenge the dominance of established players like OpenAI and Google.

OpenAI Urges Governments to Rethink Economy as AI Growth Accelerates

OpenAI has called on governments to rethink the foundations of the economy, warning that artificial intelligence (AI) could soon surpass human intelligence and drastically change how people work, live, and pay taxes. The company outlined its initial policy ideas on Monday, aimed at mitigating the economic disruption caused by rapid AI adoption in the United States and worldwide.

One key proposal is the creation of a public wealth fund that would give citizens a direct stake in AI-driven economic growth. According to the policy document, the fund could invest in diversified, long-term assets, including AI companies and broader firms adopting AI technologies, with returns distributed to all citizens.

The company also suggested that governments encourage businesses to launch four-day workweek pilot programs without any reduction in pay, an approach meant to balance AI-driven productivity gains with workers’ well-being. Lawmakers are also urged to modernize tax systems by relying more on taxes on corporate income and capital gains and less on labor income, which could shrink as a result of AI-related job losses. The report proposes additional measures, such as taxing companies that replace human labor with automation.

OpenAI recommends that social benefits, including retirement pensions and healthcare, be provided through portable accounts that follow individuals across different jobs, industries, and entrepreneurial ventures. This model would help ensure continuity of support in a labor market increasingly influenced by AI.

These recommendations echo broader discussions among AI leaders about the future of work. OpenAI CEO Sam Altman and xAI’s Elon Musk have previously highlighted universal basic income as a potential necessity as traditional employment declines. Other tech leaders, including Nvidia’s Jensen Huang and Zoom’s Eric Yuan, have advocated shorter workweeks to distribute productivity gains from AI more evenly.

Concerns about AI’s long-term impact extend beyond economics. In January, Anthropic CEO Dario Amodei warned that superintelligent AI, capable of outpacing human decision-making, poses “existential danger.” He suggested tighter controls on the export of key technologies, such as semiconductor chips used to train large language models, as one way to manage the risk. Amodei also called for transparency laws requiring AI companies to disclose how they guide their models’ behavior.

OpenAI’s policy document represents an early step in urging governments to address the structural changes AI may bring. The proposals highlight the need to rethink traditional concepts of work, taxation, and social support as the technology continues to advance rapidly.

As AI continues to reshape global economies, policymakers and industry leaders face increasing pressure to develop strategies that protect citizens while fostering innovation and sustainable growth.

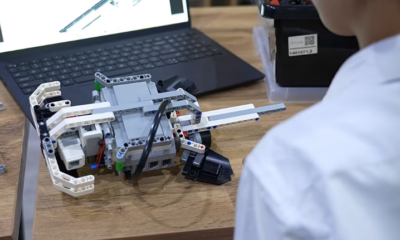

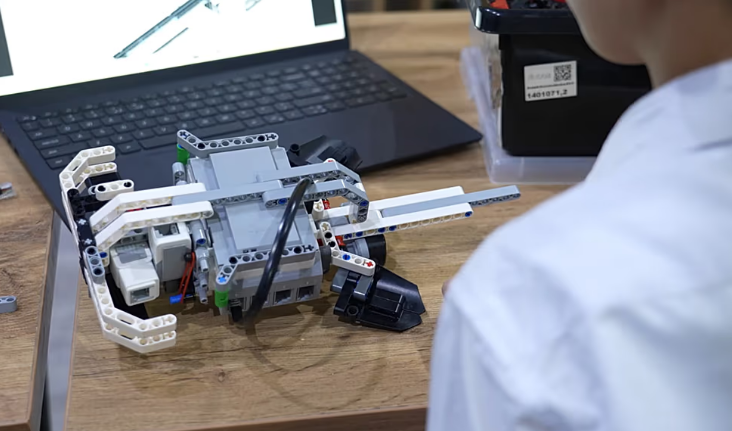

Uzbekistan to Produce Humanoid Robots in Partnership with South Korea

Uzbekistan has signed an agreement with South Korea’s ROBOTIS to launch humanoid robot production, marking a major step in its high-tech ambitions. At the same time, students across the country are learning robotics and programming, gaining skills that could prepare them for careers in the emerging industry.

The agreement, signed between the UzElTechSanoat Association and ROBOTIS, sets out plans to establish humanoid robot production within Uzbekistan, develop manufacturing infrastructure, and train specialists for the growing robotics sector. ROBOTIS, known for its humanoid platforms and smart robotic actuators, will support the creation of technological foundations and help prepare a workforce capable of designing and operating advanced robotic systems.

The initiative forms part of Uzbekistan’s broader push to build a domestic innovation ecosystem, combining industrial cooperation with education. Early exposure to robotics and programming is at the heart of this strategy.

In a robotics classroom, 12-year-old Mirkomil Shodiev demonstrates the impact of these programs. Using an EVO-3 educational robotics kit, he assembles and programs his own robot, controlling its movements through lines of code. “This was created by me,” he says. “You connect it to a computer, write code, and it performs tasks using the motor.”

Mirkomil began IT classes four months ago, starting with Scratch, and is now studying Python, a programming language widely used in web development, automation, and robotics. He hopes to build websites and earn money in the future, an ambition that reflects the growing importance of digital skills in Uzbekistan’s economy.

The government’s Digital Uzbekistan-2030 strategy is expanding nationwide training in programming and digital skills. IT education centres and specialised academies are growing to meet rising demand for technology careers. At the Robot Academy, where Mirkomil studies, students aged eight to fifteen gain hands-on experience in programming, robotics, and engineering. “Our students create scientific projects, develop games, and build Telegram bots,” says teacher Navruz Shaydullayev. “Programming helps develop their thinking, logic, and intellectual abilities.”

Classroom projects emphasize translating digital commands into physical movement, a key principle behind robotics and industrial automation. Students learn to design, assemble, and control machines independently, building skills that can directly feed into the country’s industrial ambitions.

The partnership with ROBOTIS will extend these educational initiatives into the workforce, providing training for engineers, programmers, and technicians in humanoid robotics. Officials hope the program will strengthen Uzbekistan’s technological competitiveness and create highly skilled jobs in a fast-growing global sector.

For students like Mirkomil, the future is already taking shape. “In the future, I want to continue in this field,” he says. “After finishing the courses, I would like to study in Tashkent as well.” As Uzbekistan prepares to manufacture humanoid robots, classrooms across the country are quietly training the people who may one day build them.
