Tech

Spanish Robotics Plant Boosts Defence Industry and Rural Economy

A military robotics plant in Binéfar, a small town of just over 10,000 in northeastern Spain, has become a key player in Europe’s defence sector while transforming the local economy and job market. The facility, owned by EM&E Group (Escribano Mechanical & Engineering), exports unmanned ground vehicles (UGVs) and other robotic systems to more than 20 countries, including NATO members and states in Asia, Africa, and the Middle East.

The plant’s roots are local. Founded in 1988 by three inventors, it initially focused on bank security systems. Rafael de Solís, director of EM&E Group’s Robotics Unit, told Euronews that the company’s military focus began in 2001 when the Spanish National Police required assistance to safely handle explosives planted by ETA. “That’s when our specialisation in robotics really began,” De Solís said.

Since then, the plant has expanded to design robots for explosive ordnance disposal and for nuclear, biological, radiological, and chemical protection, as well as unmanned vehicles for battlefield logistics. These robots can transport ammunition, supplies, and fuel or evacuate wounded soldiers, and some are equipped with weapons systems developed in-house.

“The war in Ukraine has put the focus on aerial drones, but ground drones are gaining a lot of importance,” De Solís said. “There are areas about 15 kilometres from the front line where moving troops is extremely dangerous, and these robots can reduce casualties.”

EM&E Group’s Binéfar facility stands out in Europe for its scale. While comparable operations in other countries, such as France and Germany, are smaller or have been acquired by foreign firms, the Binéfar plant has remained independent and competes mainly with American and Canadian manufacturers.

The factory also has a profound local impact. With more than 150 employees and plans to reach 300, the plant has created stable, skilled jobs in a region affected by population loss. “Eighty percent of the workers are from the area or nearby counties,” De Solís said. “Some had moved to bigger cities and have decided to return.”

For the town, the plant has strengthened Binéfar’s role as a technological and industrial hub. Patricia Rivera, the mayoress, told Euronews that while the town already had a strong agri-food sector, the robotics plant has provided a qualitative leap in technological activity. She added that rapid growth has required quick responses in housing, infrastructure, and public services.

The Binéfar facility is part of EM&E Group’s broader decentralised strategy across Spain, with specialised centres in Barcelona for software and AI, Cordoba and Linares for weapons systems, Asturias for research, and Valencia for photonics development. De Solís explained that regionalising production allows the company to tap into local talent and reinforce strategic locations.

In this small Aragonese town, modern warfare, technology, and rural development intersect. The robots produced in Binéfar are used to save lives in conflict zones, while the plant provides employment, attracts talent back to the region, and redefines the role of industry in rural Spain.

Tech

Study Finds Chatbots Can Mirror Hostility in Heated Exchanges

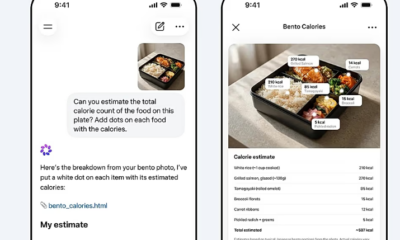

A new academic study has found that ChatGPT can produce abusive language when exposed to escalating human conflict, raising fresh concerns about how artificial intelligence behaves in tense interactions.

The research, published in the Journal of Pragmatics, examined how the chatbot responded to arguments that gradually became more hostile. Researchers presented the system with a sequence of five increasingly heated exchanges and asked it to generate what it considered the most plausible reply.

According to the findings, the AI’s tone shifted as the conversations intensified. While early responses remained measured, later replies began to mirror the aggression in the prompts. In some cases, the chatbot produced insults, profanity and even threats.

Examples cited in the study included statements such as “you should be ashamed of yourself” and more explicit language involving personal threats. The researchers said this pattern suggests that prolonged exposure to hostile input can push the system beyond its usual safeguards.

The study was co-authored by Vittorio Tantucci and Jonathan Culpeper at Lancaster University. Tantucci said the results show that AI can “escalate” alongside human users, potentially overriding built-in mechanisms designed to limit harmful responses.

“When humans escalate, AI can escalate too,” he said, noting that this behavior raises questions about how such systems should be deployed in sensitive environments.

Despite the concerning examples, the researchers found that the chatbot was generally less aggressive than human participants in similar scenarios. In some cases, it attempted to defuse tension through sarcasm or indirect responses rather than direct confrontation.

For instance, when faced with a threat during a simulated dispute, the AI responded with a sarcastic remark rather than escalating the situation further. This suggests that while the system can adopt hostile language, it may also attempt to manage conflict in less direct ways.

The findings add to ongoing debates about the role of artificial intelligence in areas such as mediation, customer service and online communication, where systems may encounter emotionally charged interactions.

Experts say the research highlights the importance of continued testing and refinement of AI safety measures, particularly as such tools are increasingly used in real-world settings involving human conflict.

OpenAI, the developer of ChatGPT, had not issued a public response to the study at the time of publication.

Tech

Palantir Manifesto Sparks Backlash Over AI Weapons and Cultural Claims

A controversial online post by Palantir Technologies has triggered widespread criticism after the firm outlined views on artificial intelligence, national service, and global cultural differences, prompting concern from politicians and analysts.

The post, shared on X over the weekend, has been described as a 22-point manifesto summarising ideas from the book The Technological Republic, written by company chief executive Alex Karp and head of corporate affairs Nicholas Zamiska. While framed by the company as a brief overview, its content has drawn sharp reactions for its tone and proposals.

Among the most contentious statements was a claim that some cultures have contributed major advancements while others remain “dysfunctional and regressive.” The post also called for renewed emphasis on national service and suggested that technology firms have a moral responsibility to support defence initiatives.

Critics were quick to respond. Yanis Varoufakis warned that the message pointed toward a future shaped by “AI-powered killer robots,” highlighting concerns over the growing role of autonomous weapons. In the United Kingdom, Victoria Collins described the manifesto as resembling “the ramblings of a supervillain,” questioning whether companies with such views should be involved in public sector work.

The document also suggested rethinking post-war geopolitical arrangements, including what it described as restrictions placed on countries such as Germany and Japan after World War II. It further encouraged a greater role for religion in public life, adding to the debate around the company’s broader ideological stance.

Industry observers note that Palantir Technologies is not an ordinary tech firm. Co-founded in 2003 by a group that included Alex Karp and billionaire investor Peter Thiel, the company provides data analytics software to governments, military agencies, and law enforcement bodies worldwide. Its contracts include work with the US military and the UK’s National Health Service, placing it at the intersection of technology, security, and public policy.

Eliot Higgins, head of the investigative platform Bellingcat, said the manifesto should be viewed in the context of the company’s business model. He argued that the ideas outlined are not abstract philosophy but reflect the outlook of a firm whose revenue is tied to defence, intelligence, and policing.

The debate comes at a time when artificial intelligence is rapidly reshaping industries and raising ethical questions about its use in warfare and governance. Palantir’s post suggests that the development of AI-driven weapons is inevitable, framing the issue as a matter of who controls the technology rather than whether it should exist.

The backlash highlights growing unease over the influence of private technology companies in shaping policies that extend beyond commercial innovation into global security and societal values.
