Tech

Cybersecurity Experts Warn of Risks in AI Caricature Trend

The latest AI-generated caricature trend, in which users upload images to chatbots like ChatGPT, could pose serious security risks, cybersecurity experts have warned. Images uploaded to AI chatbots could be retained for an unknown amount of time and, if they fall into the wrong hands, could be used for impersonation, scams, and fake social media accounts.

The trend invites users to submit photos of themselves, sometimes alongside company logos or job details, and ask AI systems to create colorful caricatures based on what the chatbot “knows” about them. While the results can be entertaining, experts caution that sharing these images can reveal far more than participants realise.

“You are doing fraudsters’ work for them — giving them a visual representation of who you are,” said Bob Long, vice-president at age authentication company Daon. He added that the trend’s wording alone raises concerns, suggesting it could have been “intentionally started by a fraudster looking to make the job easy.”

When an image is uploaded, AI systems process it to extract data such as a person’s emotions, surroundings, or potentially location details, according to cybersecurity consultant Jake Moore. This information may then be stored indefinitely. Long said that uploaded images could also be used to train AI image generators as part of their datasets.

The potential consequences of data breaches are significant. Charlotte Wilson, head of enterprise at Israeli cybersecurity firm Check Point, said that if sensitive images fall into the wrong hands, criminals could use them to create realistic AI deepfakes, run scams, or establish fake social media accounts. “Selfies help criminals move from generic scams to personalised, high-conviction impersonation,” she said.

OpenAI’s privacy policy states that images may be used to improve the model, including training it. ChatGPT clarified that this does not mean every uploaded photo is stored in a public database, but patterns from user content may be used to refine how the system generates images.

Experts emphasise precautions for those wishing to participate. Wilson advised avoiding images that reveal identifying details. “Crop tightly, keep the background plain, and do not include badges, uniforms, work lanyards, location clues or anything that ties you to an employer or a routine,” she said. She also recommended avoiding personal information in prompts, such as job titles, city, or employer.

Moore suggested reviewing privacy settings before participating. OpenAI allows users to opt out of AI training for uploaded content via a privacy portal, and users can also disable text-based training by turning off the “improve the model for everyone” option. Under EU law, users can request the deletion of personal data, though OpenAI may retain some information to address security, fraud, and abuse concerns.

As AI trends continue to gain popularity, experts caution that even seemingly harmless images can carry significant risks. Proper precautions and awareness are essential for users to protect their personal information while engaging with new AI technologies.

Tech

Meta Launches Muse Spark, Its First Major AI Model in Nine Months

Meta has unveiled its first major AI model in nine months, following a $14.3 billion (€12.24 billion) investment and an executive hiring push aimed at rivaling OpenAI and Google. The American tech company introduced the model, called Muse Spark, on Wednesday, claiming it is faster and smarter than its previous models.

The company, founded by Mark Zuckerberg, invested $14.3 billion in Scale AI in June 2025 and recruited its CEO and co-founder, Alexandr Wang, to oversee Meta Superintelligence Labs, which houses teams working on foundational AI models. Zuckerberg also embarked on a hiring campaign, bringing in executives from competitors including OpenAI, Anthropic, and Google.

In a blog post, Meta said, “Over the last nine months, Meta Superintelligence Labs rebuilt our AI stack from the ground up, moving faster than any development cycle we have run before. This initial model is small and fast by design, yet capable enough to reason through complex questions in science, math, and health. It is a powerful foundation, and the next generation is already in development.”

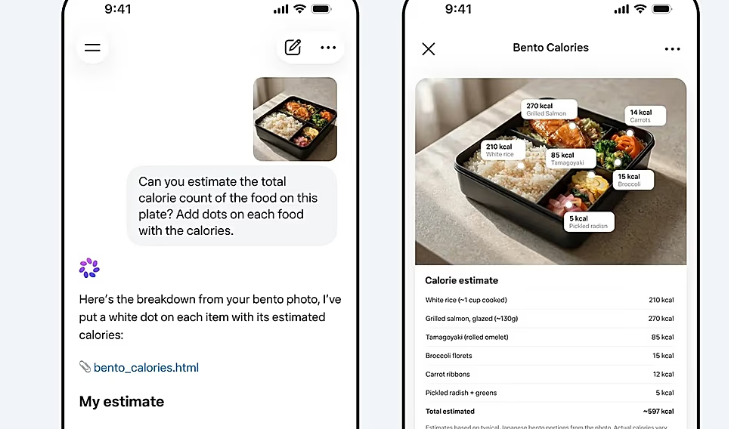

Muse Spark is positioned as a significant upgrade over Meta’s last major release, Llama 4, launched in April 2025. The company highlighted that the model excels in advanced reasoning, particularly in scientific, mathematical, and medical queries. To improve its health advice capabilities, Meta worked with over 1,000 physicians to curate training data, aiming for more accurate and comprehensive responses.

The AI model will power the company’s digital assistant in the Meta AI app and website, with planned integration across Facebook, Instagram, WhatsApp, Messenger, and the Ray-Ban Meta AI glasses. A “contemplating mode” will gradually roll out, allowing multiple AI agents to reason in parallel on complex tasks. Meta’s technical blog noted this feature is designed to compete with high-level reasoning in models such as Gemini Deep Think and GPT Pro.

Zuckerberg emphasized on social media that Meta aims to build AI products that “don’t just answer your questions but act as agents that do things for you.” Unlike conventional chatbots, these AI agents operate autonomously, gathering information based on user preferences to assist without direct human commands.

One notable shift for Meta is the move away from open-source AI models. Unlike earlier releases, Muse Spark is not available for public download, meaning access to the technology is currently restricted. The company said the model is initially available only in the United States.

Muse Spark underscores Meta’s aggressive push into the competitive AI market, combining extensive investment, executive recruitment, and technical innovation to challenge the dominance of established players like OpenAI and Google.
